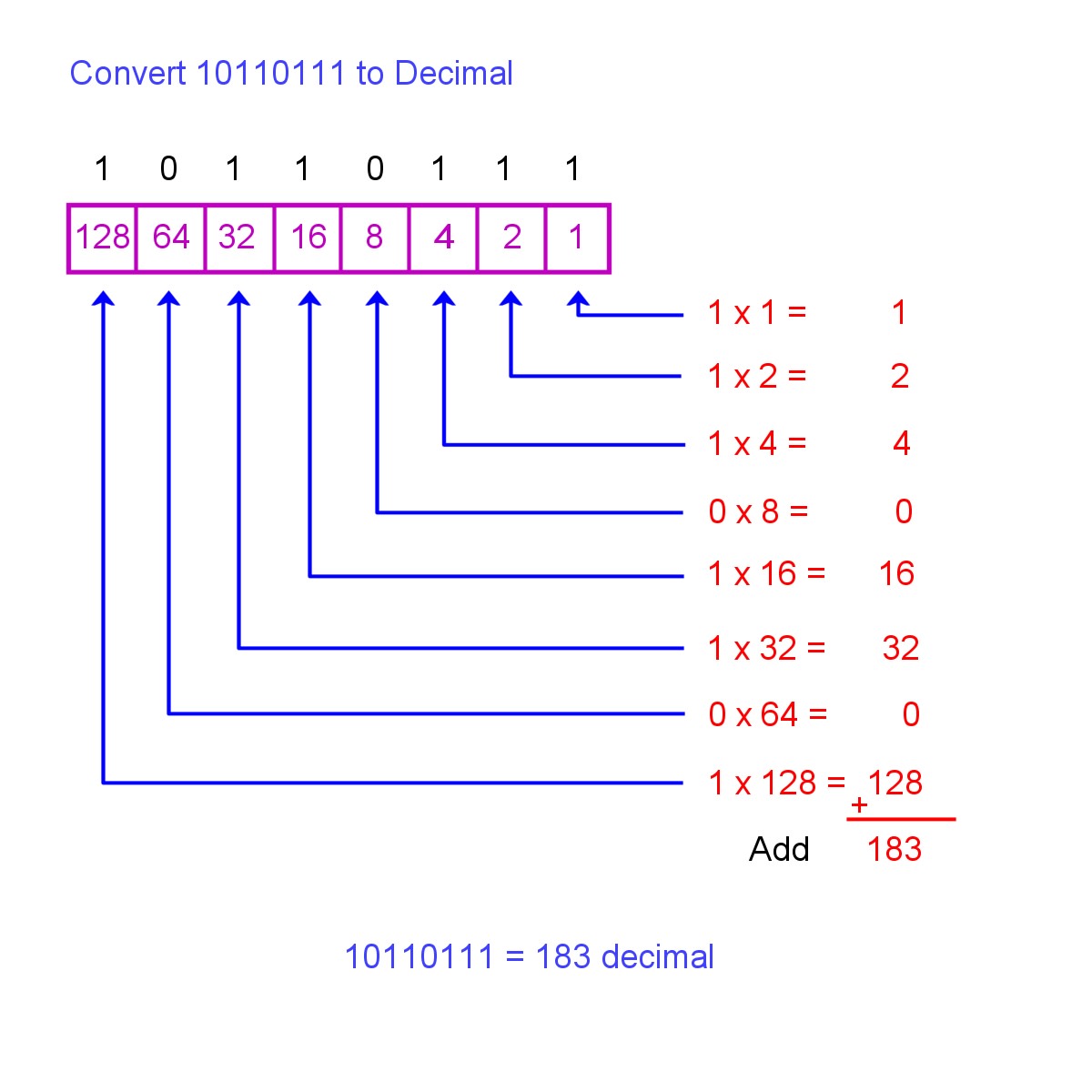

So I am trying to create a binary-to-decimal converter and am following the instructions on this site: I want to use this for numbers of different sizes, and therefore I tried to make it "generic". Here is my attempt: module binary_to_decimal

The idea is that each group of 4 bits in the output register "decimal" will contain the value of one digit of the decimal number. Instead, the "decimal" output is always either 0 or 1, never anything higher. I am quite new to HDL, and especially to Verilog, so all kinds of criticism (hopefully constructive) are very welcome.

So you are doing a double dabble algorithm. Understand that your code eventually needs to get synthesized into hardware. The synthesizer will see your code and say: in each iteration of this loop, decimal depends on its value from the previous iteration. Therefore, I need to build a computational tree with nodes that compute each intermediate decimal value. This can make a really big circuit that is slow and hard to meet timing with (as you increase your parameter sizes).

You could explore other strategies, like taking N clocks to compute your answer, so that each clock you do one shift and one add (one step for each of the N binary input bits). You can then implement the whole circuit with one adder, one shifter, and one wide register. Of course, your design will then have a latency of N clocks and a throughput of one result every N clocks (as it will be busy otherwise).

Another idea would be to pipeline your design, so you make N registers, N shifters, and N adders. In each stage you take data, do a shift and an add, and then register the result into the next set of flops. This will have a big footprint, like the original design, but it does much less per clock, so it will meet timing more easily. It will have a lot of latency, but it will still have a throughput of one result per clock.

You could then maybe figure out a hybrid: do 3 or 4 steps per clock, since you could still meet timing easily, and that reduces the latency in clock cycles. These are the kinds of thoughts you need to go through as a digital designer: balancing power, area, throughput, latency, and timing.

Here's an example of the serial (not pipelined) method that I mentioned. I use one working register and have an output signal to tell the instantiating module when I am done. The pipelined version would of course have a valid output (as it takes time to propagate through), but it wouldn't need a busy flag.

Module to convert an IW-length binary number to BCD, taking IW clocks. Protocol: input data any time o_busy is low and i_rst is low; latch the output from o_bcd when o_valid is high. The idea is as follows: in BCD each nibble (4 bits) should overflow into the next at 10, however a binary nibble can store up to 15. As we shift the input bits into a scratch register, we want a nibble to overflow on the next shift if it holds 5 or more, so we add 3 to cause that overflow on the next shift. This design can be pipelined, but this one is for slowly updating numbers. Example: 'd100 -> 'h100 (that's how BCD expansion works, no?)

So try making tests and consider different types of pipelining.

First of all: thanks for such an elaborate answer. Yeah, I noticed that something was off with this implementation, since this module alone increased the total LUT usage to about 4 times the number of LUTs available, while the rest of my implementation (which uses much more code and is what I consider more "advanced" logic) only took up a combined 50-60% (I use a TinyFPGA A1). I still have a way to go to understand the full extent of what for loops do in HDL. I do have a small FPGA to fit everything into and will certainly need to see other implementations that don't eat up all my space. The other strategies sound like fun to try to implement, and I will certainly try them out!
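For anyone wanting to check a testbench for the serial strategy discussed in this thread, here is a minimal Python reference model of double dabble doing one step per "clock". It is only a behavioral sketch, not synthesizable HDL and not the answerer's module. The names `double_dabble_step` and `bin_to_bcd` are made up for illustration, and the digit count is simply assumed to be wide enough for the largest `width`-bit value.

```python
def double_dabble_step(scratch: int, bit_in: int, digits: int) -> int:
    """Model one clock: correct each BCD nibble, then shift in one input bit."""
    corrected = 0
    for d in range(digits):
        nibble = (scratch >> (4 * d)) & 0xF
        if nibble >= 5:
            # Add 3 so the upcoming shift carries into the next decimal digit.
            nibble += 3
        corrected |= nibble << (4 * d)
    mask = (1 << (4 * digits)) - 1
    return ((corrected << 1) | bit_in) & mask


def bin_to_bcd(value: int, width: int) -> int:
    """Convert a width-bit binary value to packed BCD in `width` steps."""
    # Assumption: enough BCD digits to hold the largest width-bit number.
    digits = len(str((1 << width) - 1))
    scratch = 0
    for i in reversed(range(width)):  # feed the input MSB first
        scratch = double_dabble_step(scratch, (value >> i) & 1, digits)
    return scratch


if __name__ == "__main__":
    # Packed BCD printed as hex reads like the decimal number.
    print(hex(bin_to_bcd(100, 8)))
```

Each call to `double_dabble_step` corresponds to one clock of the serial design (one shifter, one set of add-3 correctors, one wide register), so a `width`-bit input takes `width` steps. A pipelined version would instead unroll the same step into `width` registered stages.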
